Intel's Pentium Extreme Edition 955: 65nm, 4 threads and 376M transistors

by Anand Lal Shimpi on December 30, 2005 11:36 AM EST- Posted in

- CPUs

Literally Dual Core

One of the major changes with Presler is that unlike Smithfield, the two cores are not a part of the same piece of silicon. Instead, you actually have a single chip with two separate die on it. By splitting the die in two, Intel can reduce total failure rates and even be far more flexible with their manufacturing (since one Presler chip is nothing more than two Cedar Mill cores on a single package).

In order to find out if there was an appreciable increase in core-to-core communication latency, we used a tool called Cache2Cache, which Johan first used in his series on multi-core processors. Johan's description of the utility follows:

Not only did we not find an increase in latency between the two cores on Presler, communication actually occurs faster than on Smithfield. We made sure that it had nothing to do with the faster FSB by clocking the chip at 2.8GHz with an 800MHz FSB and repeated the tests only to find consistent results.

We're not sure why, but core-to-core communication is faster on Presler than on Smithfield. That being said, a difference of less than 9ns just isn't going to be noticeable in the real world - given that we've already seen that the Athlon 64 X2's 100ns latency doesn't really help it scale better when going from one to two cores.

One of the major changes with Presler is that unlike Smithfield, the two cores are not a part of the same piece of silicon. Instead, you actually have a single chip with two separate die on it. By splitting the die in two, Intel can reduce total failure rates and even be far more flexible with their manufacturing (since one Presler chip is nothing more than two Cedar Mill cores on a single package).

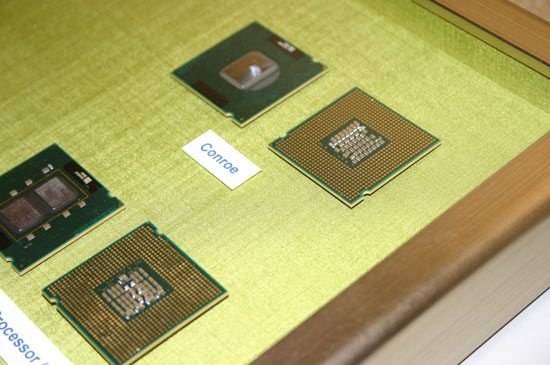

The chip at the bottom of the image is Presler; note the two individual cores.

In order to find out if there was an appreciable increase in core-to-core communication latency, we used a tool called Cache2Cache, which Johan first used in his series on multi-core processors. Johan's description of the utility follows:

"Michael S. started this extremely interesting thread at the Ace's hardware Technical forum. The result was a little program coded by Michael S. himself, which could measure the latency of cache-to-cache data transfer between two cores or CPUs. In his own words: "it is a tool for comparison of the relative merits of different dual-cores."Armed with Cache2Cache, we looked at the added latency seen by Presler over Smithfield:

"Cache2Cache measures the propagation time from a store by one processor to a load by the other processor. The results that we publish are approximately twice the propagation time. For those interested, the source code is available here."

| Cache2Cache Latency in ns (Lower is Better) | |

| AMD Athlon 64 X2 4800+ | 101 |

| Intel Smithfield 2.8GHz | 253.1 |

| Intel Presler 2.8GHz | 244.2 |

Not only did we not find an increase in latency between the two cores on Presler, communication actually occurs faster than on Smithfield. We made sure that it had nothing to do with the faster FSB by clocking the chip at 2.8GHz with an 800MHz FSB and repeated the tests only to find consistent results.

We're not sure why, but core-to-core communication is faster on Presler than on Smithfield. That being said, a difference of less than 9ns just isn't going to be noticeable in the real world - given that we've already seen that the Athlon 64 X2's 100ns latency doesn't really help it scale better when going from one to two cores.

84 Comments

View All Comments

Anand Lal Shimpi - Friday, December 30, 2005 - link

I had some serious power/overclocking issues with the pre-production board Intel sent for this review. I could overclock the chip and the frequency would go up, but the performance would go down significantly - and the chip wasn't throttling. Intel has a new board on the way to me now, and I'm hoping to be able to do a quick overclocking and power consumption piece before I leave for CES next week.Take care,

Anand

Betwon - Friday, December 30, 2005 - link

We find that it isn't scientific. Anandtech is wrong.

You should give the end time of the last completed task, but not the sum of each task's time.

For expamle: task1 and task2 work at the same time

System A only spend 51s to complete the task1 and task2.

task1 -- 50s

task2 -- 51s

System B spend 61s to complete the task1 and task2.

task1 -- 20s

task2 -- 61s

It is correct: System A(51s) is faster than System B(61s)

It is wrong: System A(51s+50s=101s) is slower than System B(20s+61s=81s)

tygrus - Tuesday, January 3, 2006 - link

The problem is they don't all finish at the same time and the ambiguous work of a FPS task running.You could start them all and measure the time taken for all tasks to finish. That's a workload but it can be susceptible to the slowest task being limited by its single thread performance (once all other tasks are finished, SMP underutilised).

Another way is for tasks that take longer and run at a measurable and consistent speed.

Is it possible to:

* loop the tests with a big enough working set (that insures repeatable runs);

* Determine average speed of each sub-test (or runs per hour) while other tasks are running and being monitored;

* Specify a workload based on how many runs, MB, Frames etc. processed by each;

* Calculate the equivalent time to do a theoretical workload (be careful of the method).

Sub-tasks time/speed can be compared to when they were run by themselves (single thread, single active task). This is complicated by HyperThreading and also multi-threaded apps under test. You can work out the efficiency/scaling of running multiple tasks versus one task at a time.

You could probably rejig the process priorities to get better 'Splinter Cell' performance.

Viditor - Saturday, December 31, 2005 - link

Scoring needs to be done on a focused window...By doing multiple runs with all of the programs running simultaneously, it's possible to extract a speed value for each of the programs in turn, under those conditions. The cumulative number isn't representative of how long it actually took, but it's more of a "score" on the performance under a given set of conditions.

Betwon - Saturday, December 31, 2005 - link

NO! It is the time(spend time) ,not the speed value.You see:

24.8s + 13.7s = 38.5s

42.8s + 42.2s + 46.6s + 65.9s = 197.5s

Anandtech's way is wrong.

Viditor - Saturday, December 31, 2005 - link

It's a score value...whether it's stated in time or even an arbitrary number scale matters very little. The values are still justified...

Betwon - Saturday, December 31, 2005 - link

You don't know how to test.But you still say it correct.

We all need the explains from anandtech.

Viditor - Saturday, December 31, 2005 - link

Then I better get rid of these pesky Diplomas, eh?

I'll go tear them up right now...:)

Betwon - Saturday, December 31, 2005 - link

I mean: You don't know how the anandtech go on the tests.The way of test.

What is the data.

We only need the explain from anandtech, but not from your guess.

Because you do not know it!

you are not anandtech!

Viditor - Saturday, December 31, 2005 - link

Thank you for the clarification (does anyone have any sticky tape I could borrow? :)What we do know is:

1. All of the tests were started simultaneously..."To find out, we put together a couple of multitasking scenarios aided by a tool that Intel provided us to help all of the applications start at the exact same time"

2. The 2 ways to measure are: finding out individual times in a multitasking environment (what I think they have done), or producing a batch job (which is what I think you're asking for) and getting a completion time.

Personally, I think that the former gives us far more usefull information...

However, neither scenario is more scientifically correct than the other.