IGP Power Consumption - 780G, GF8200, and G35

by Gary Key on April 18, 2008 2:00 AM EST - Posted in CPUs

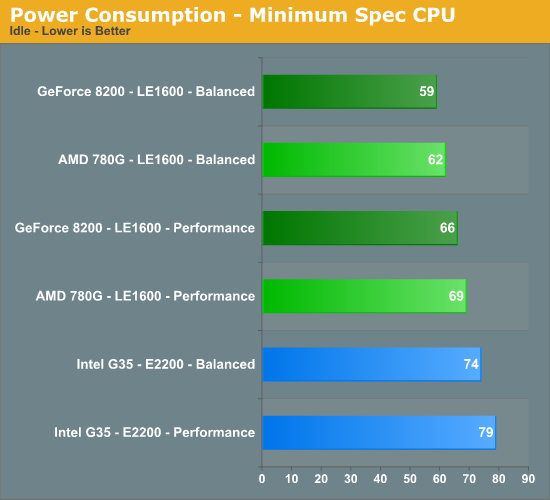

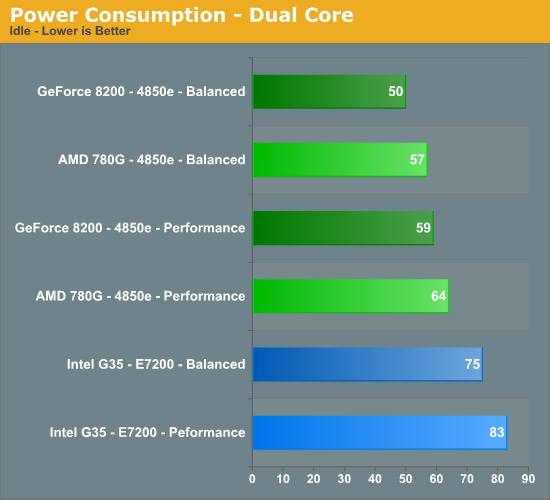

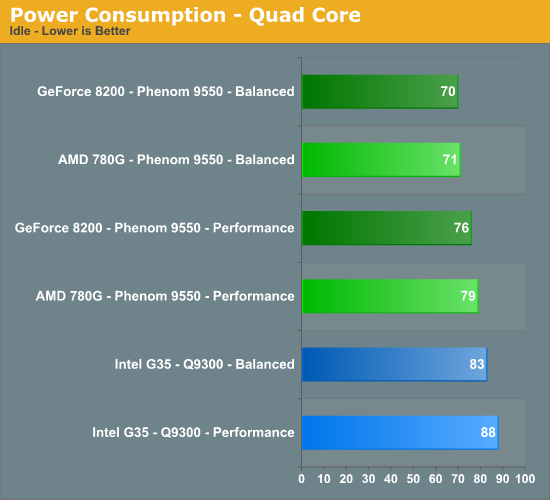

Idle Tests

We enabled the power management capabilities of each chipset in the BIOS and left our voltage selections on auto except for memory. The boards would default to 1.80V or 1.85V, but we found 1.90V necessary for absolute stability in our configurations at the rated 4-4-4-12 timings.

On the two AMD boards, this resulted in almost identical settings, the exception being chipset voltages, although those were within a fraction of a volt of each other. Overall, each of the CPUs hovered around 1.250V and all power management options functioned perfectly on these particular boards. We then set Vista to use either the Performance or Balanced profile depending on test requirements.

We typically run our machines with the Balanced profile. Using the Power Savings setting resulted in a decrease of 1W to 5W depending on the CPU and application tested. The Balanced and Power Savings profiles both set the minimum value for processor power management to 5%, while the maximum is set to 100% for Balanced and 50% for Power Savings. The Performance setting sets both values to 100%, which is the reason for the increase in power requirements even if you have power management turned on in the BIOS.
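As a rough illustration of the profile limits described above, the sketch below stores the three minimum/maximum pairs in a small table and checks which profiles leave room for the processor to drop below full speed. The names `PROFILES` and `allows_idle_downclock` are made up for this example and are not part of Vista or any tool we used; the percentages are the ones quoted in the text.

```python
# Processor power management limits per Vista profile, as quoted in the text:
# (minimum processor state %, maximum processor state %)
PROFILES = {
    "Performance": (100, 100),
    "Balanced": (5, 100),
    "Power Savings": (5, 50),
}


def allows_idle_downclock(profile):
    """Return True if the profile's minimum state is below 100%,
    meaning the OS is permitted to lower the processor state at idle."""
    minimum, _maximum = PROFILES[profile]
    return minimum < 100


for name, (minimum, maximum) in PROFILES.items():
    print(f"{name}: min {minimum}%, max {maximum}%, "
          f"idle downclock allowed: {allows_idle_downclock(name)}")
```

Only the Performance profile pins both values at 100%, which fits with it drawing more power even when power management is enabled in the BIOS.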

The results surprised us - more like floored us. The same company that brings you global warming friendly chipsets like the 680i/780i has suddenly turned a new leaf, or at least saved the tree it came from. We see the GeForce 8200 besting the AMD 780G and Intel G35 platforms in our minimum spec configuration utilizing the Power Saving profile by 3W and 15W respectively. To be fair to Intel, we are comparing a single core AMD processor to a dual-core processor. However, these are the minimum CPUs we would utilize. (4/19/08 Update - Minimum Spec chart is correct now)

Frankly, the AMD LE1600 is just on the verge of not being an acceptable processor during HD playback. The LE1600 was able to pass all of our tests, but the menu operation was slow when choosing our movie options and CPU utilization did hit the upper 90% range on some of the more demanding titles even with the 780G or GF8200 providing hardware offload capabilities.

The pattern changes slightly with the dual-core setup, where the GF8200 holds a 7W advantage over the 780G and a 25W advantage over the G35. Our quad-core results are almost even, with the GeForce 8200 board from Biostar showing only a 1W difference compared to the Gigabyte 780G setup. The GeForce 8200/Phenom 9550 combo comes in with a 13W advantage over the Q9300 on the ASUS G35 board.

44 Comments


wjl - Sunday, April 27, 2008 - link

If you own a decent mainboard and processor (meaning anything newer than AMD K7 or Intel P4), I think it's mostly the power supply which can help with efficiency.

Building my AMD X2 3800+ EE into a bigger case with an 80+ certified power supply (and 90+ is coming soon as well, according to DigiTimes) helped reduce the idle power consumption from 89W (with one hard disk and TV card) to some 74W (now with two hard disks and TV cards).

New main boards and single platter hard disks may help, but it's normally better to look at the weak points of your current systems IMHO.

computerfarmer - Wednesday, April 23, 2008 - link

It is good to see this kind of information. Lower power consumption has its place considering the cost; those that pay the bills will understand. I am looking forward to the 780G roundup, and if it includes the 8200, that's good too.

Keep up the excellent work.

bingbong - Tuesday, April 22, 2008 - link

Ok, thanks to those who said it is available. I will try the vendors again. I have only been looking online because I tried to buy it a couple of times. Actually I am in Taiwan so the availability is usually pretty good.

l8r

sheh - Monday, April 21, 2008 - link

This recent power efficiency trend is something I definitely like. At this rate, and with Atom coming, it might be possible to run a decent general-purpose computer on a <200W PSU.

*cleans up the AT PSU*

smilingcrow - Sunday, April 20, 2008 - link

I’ve tested a couple of systems with different Gigabyte G35 boards with various 65nm C2Ds and idle power consumption averaged around 55W with a spec similar to yours; only 2x1GB of RAM though. SPCR tested the exact same Asus board and managed no worse than 56W at idle, although that was with a lesser spec and an older, less efficient E6400. This makes me wonder how you managed to record 84W at idle with an E2200 when I managed 52.5W with an E2140!

wjl - Sunday, April 20, 2008 - link

Yeah, exactly. My wife's machine (G35, E8200, 2Gig RAM, and a Samsung F1 750) draws some 69W on idle when in Gnome / Debian Lenny - and that is reported to take more than anything M$. The power supply used here is an EarthWatts (Seasonic) 500W 80+, which seems to be ok even at those low levels.

My own one for comparison: AMD X2 3800+ EE on Nvidia 6150/430, 3Gig, 2x250Gig, 380W EarthWatts 80+ - takes also 69 Watts in its best moments (Gnome / Debian Etch, which doesn't have something like cpufreqd yet), tho here the "average" idle is more like 75W.

cheers,

wjl

smilingcrow - Monday, April 21, 2008 - link

I emailed the author about the G35 power data as it seemed disproportionately high and was skewing the results; he didn’t reply but the G35/E2200 at idle data has now been reduced from 84 to 74W with no reference being made to the change!

smilingcrow - Monday, April 21, 2008 - link

In a previous article by the same author (http://www.anandtech.com/cpuchipsets/showdoc.aspx?...) he looked at the same Asus G35 & Gigabyte 780G boards but compared them against an Asus GF8200 board and made the following comment:

“As far as power consumption goes during H.264 playback, the AMD platform averaged 106W, NVIDIA platform at 102W, and the Intel platform averaged 104W - too close to really declare a true winner.”

The same CPUs were used so some consistency might be expected between that review and the current one, but if the power data for H.264 decoding is compared we get this:

Platform – Old / New / Difference (all in Watts)

GF8200 – 102 / 77 / 25

780G – 106 / 84 / 22

G35 – 104 / 103 / 1

Somehow the G35 platform seemed to gain 21 to 24W of power consumption compared to the two AM2+ platforms. Is this purely down to the higher bit-rate of the movie tested in the current review showing up the inefficiency of the G35 platform, or is this another anomaly in the G35 power data?
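The arithmetic behind that comparison can be checked with a short sketch. It uses only the H.264 playback wattages quoted in this comment; the names `readings`, `drops`, and `gap_vs_g35` are made up for illustration.

```python
# H.264 playback power in Watts, as quoted in the comment:
# (previous review, current review)
readings = {
    "GF8200": (102, 77),
    "780G": (106, 84),
    "G35": (104, 103),
}

# How far each platform's reading fell between the two reviews
drops = {name: old - new for name, (old, new) in readings.items()}

# How much less the G35 fell than each AM2+ platform
gap_vs_g35 = {
    name: drops[name] - drops["G35"] for name in ("GF8200", "780G")
}

print(drops)
print(gap_vs_g35)
```

The two gaps are the 24W and 21W figures referred to in the comment.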

deruberhanyok - Saturday, April 19, 2008 - link

Interesting article but I'd like to echo some thoughts already posted. Seeing the power numbers is great but without any performance context to them (or comparisons being kept to same brand / models as close as possible) it's hard to see exactly where this all fits in.

Also, low power boxes would be perfectly content running off of something like a Seasonic S12II-330. It could make a difference in overall power usage as well. Silent PC Review recently discussed this in their Sparkle 250W 80 Plus PSU review.

As others are, I'm looking forward to seeing the full roundup. Thanks for all the hard work!